Modern microservice applications produce an incredible amount of telemetry data. Data volumes have risen exponentially, yet mean time to resolution (MTTR) has stayed flat. Of course, without all that telemetry data, resolving incidents would be a near-impossible task. However, something has got to give. Organizations cannot keep collecting ever-larger volumes of telemetry data as application complexity continues to grow, and AI is only accelerating that growth. The idea that you can never have too much data no longer holds true, because the technical and financial burdens are simply too great. Now that collecting everything “just in case” is no longer a viable strategy, a new era of telemetry data efficiency is emerging.

How Much Telemetry Data Does a Microservice App Generate?

There are some rules of thumb that give useful insight into just how much telemetry data a modern microservice application generates.

Tracing data is made up of multiple spans produced by each service as it processes a request. The rule of thumb for estimating the number of spans for each request served is:

Spans ≈ services × 4.5

Architectural choices can push this figure higher. Object-relational mapping (ORM) frameworks can be very noisy, and some message queues create many spans for each message processed.

Typically, a span is 2 kB, and 100 spans per request is a healthy level of instrumentation. However, many applications produce much more than this. Assume a healthy level of instrumentation and a load of 50 requests per second. That is roughly 10 MB of spans every second, which adds up to an astonishing total of about 26 terabytes of trace data per month.

For log data, the rule of thumb for the number of log events for each request is:

Log events ≈ services × 8

At 100 spans per request, the span rule of thumb implies about 22 services, or roughly 178 log events per request. Under the same load, that comes to about 23 billion log events per month. Typically, a log event is 512 bytes in size, giving a total data volume of about 12 terabytes per month.

What is really shocking is how this compares with the volume of application data generated under the same load. Modern applications typically serve JSON data in response to a request, which is then rendered by a web or mobile application. For the purpose of the comparison, assume each request returns 1 kB of JSON data. The volume of application data served over one month is 132 gigabytes. The telemetry data totals 38 terabytes, nearly 300 times as much. This is not sustainable.
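The arithmetic behind these estimates can be reproduced with a short Python sketch. It assumes a 30-day month and treats 1 kB as 1,024 bytes, which is what makes the application data come out at about 132 GB. The function and constant names are illustrative only.

```python
# Rules of thumb from the text, used to estimate monthly data volumes.
SPANS_PER_SERVICE = 4.5      # spans per request ≈ services × 4.5
LOGS_PER_SERVICE = 8         # log events per request ≈ services × 8
SPAN_BYTES = 2 * 1024        # ~2 kB per span
LOG_BYTES = 512              # ~512 bytes per log event
RESPONSE_BYTES = 1 * 1024    # ~1 kB of JSON per response

SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # assumes a 30-day month


def monthly_volumes(requests_per_second: float, spans_per_request: float) -> dict:
    """Estimate monthly trace, log, and application data volumes in bytes."""
    requests = requests_per_second * SECONDS_PER_MONTH
    # Back out the service count from the span rule of thumb.
    services = spans_per_request / SPANS_PER_SERVICE
    log_events = requests * services * LOGS_PER_SERVICE
    return {
        "trace_bytes": requests * spans_per_request * SPAN_BYTES,
        "log_events": log_events,
        "log_bytes": log_events * LOG_BYTES,
        "app_bytes": requests * RESPONSE_BYTES,
    }


v = monthly_volumes(requests_per_second=50, spans_per_request=100)
telemetry = v["trace_bytes"] + v["log_bytes"]
print(f"traces:     {v['trace_bytes'] / 1e12:.1f} TB")
print(f"log events: {v['log_events'] / 1e9:.1f} billion")
print(f"logs:       {v['log_bytes'] / 1e12:.1f} TB")
print(f"app data:   {v['app_bytes'] / 1e9:.1f} GB")
print(f"telemetry is {telemetry / v['app_bytes']:.0f}x the application data")
```

With these assumptions, the sketch reports about 26.5 TB of traces, 23 billion log events (11.8 TB), and 132.7 GB of application data. That puts telemetry at roughly 289 times the application data.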

Why Filtering Rules Fail to Control Observability Costs

Most organizations are becoming aware of the problem of ever-expanding telemetry data volumes, primarily because of the significant platform costs of Datadog, New Relic, Splunk, and others. To be fair, those platforms do provide tools that let customers limit the volume of telemetry data. Those tools take the form of filtering rules that decide which data to let through and which to drop. However, this creates two challenges. First, the rules must be manually created and maintained, which consumes valuable engineering resources. Second, there is the fear of missing out, driven by Murphy’s Law: there is always the nagging doubt that the data dropped by the rules will be exactly what is needed to resolve an incident. Out of the frying pan and into the fire.

How Intelligent Telemetry Sampling Reduces Data Volume

The crux of the problem is:

Some of the data is useful all of the time. All of the data is useful some of the time. Not all of the data is useful all of the time.

Unfortunately, there is no way of knowing in advance which data will be useful when. That is why organizations keep sending massive amounts of telemetry data to observability platforms, damn the expense, “just in case” it is needed.

For log data, the Grepr intelligent observability data engine automatically identifies frequently occurring log events, which are summarized and passed on. Infrequent log events, typically errors, are passed straight through, so health rules still trigger.
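The following Python sketch illustrates the general idea of summarizing frequent log events while passing rare ones through. It is not Grepr's algorithm. The regex masking rules, the per-window `threshold`, and the summary line format are all assumptions chosen for illustration.

```python
import re
from collections import Counter
from dataclasses import dataclass, field

# Hypothetical masking rules: replace variable tokens so that log lines that
# differ only in IDs, addresses, or numbers collapse into one pattern.
_MASKS = [
    (re.compile(r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b", re.I), "<uuid>"),
    (re.compile(r"\b0x[0-9a-f]+\b", re.I), "<hex>"),
    (re.compile(r"\d+"), "<num>"),
]


def pattern_of(message: str) -> str:
    """Reduce a log message to a pattern by masking variable tokens."""
    for regex, placeholder in _MASKS:
        message = regex.sub(placeholder, message)
    return message


@dataclass
class LogSummarizer:
    """Forward the first `threshold` events per pattern in a window, suppress
    the rest, and emit one summary line per noisy pattern at window end."""
    threshold: int = 5
    counts: Counter = field(default_factory=Counter)

    def process(self, message: str) -> list:
        pattern = self.counts_key = pattern_of(message)
        self.counts[pattern] += 1
        # Rare patterns (e.g. an occasional error) always stay under the
        # threshold, so they are forwarded verbatim.
        return [message] if self.counts[pattern] <= self.threshold else []

    def close_window(self) -> list:
        """Summarize suppressed events and start a fresh window."""
        summaries = [
            f"[summary] {count - self.threshold} more events like: {pattern}"
            for pattern, count in self.counts.items()
            if count > self.threshold
        ]
        self.counts.clear()
        return summaries


s = LogSummarizer(threshold=2)
out = []
for i in range(5):
    out += s.process(f"GET /api/orders/{i} 200 in {10 + i}ms")
out += s.process("ERROR payment failed for order 42")
out += s.close_window()
for line in out:
    print(line)
```

Here the chatty access log collapses to two samples plus a single summary count, while the one-off error is forwarded untouched and can still trip a health rule.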

For trace span data, Grepr buffers the spans to build a complete end-to-end trace. Each trace is then fingerprinted by analyzing its pattern of flow across services, and a baseline performance is calculated for each unique trace fingerprint. Sampling rules are then applied, for example: 100% of errors, 50% of very slow traces, 25% of slow traces, and 1% by default. Because all the spans are buffered to build a complete end-to-end trace for analysis, the entire trace is sent through whenever a sampling rule matches. As a result, engineers always have a complete end-to-end trace with zero missing spans, providing complete fidelity.
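The buffer, fingerprint, baseline, and sample flow described above is a form of tail-based sampling. It can be sketched in Python as follows. This is not Grepr's implementation. The `Span` fields, the caller-to-callee edge-set fingerprint, the median baseline with 1.5× and 3× slowness thresholds, and the minimum history of 10 traces are all assumptions. Only the sample rates come from the example in the text.

```python
import hashlib
import random
import statistics
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

# Example rates from the text: 100% errors, 50% very slow, 25% slow, 1% default.
SAMPLE_RATES = {"error": 1.0, "very_slow": 0.5, "slow": 0.25, "default": 0.01}


@dataclass(frozen=True)
class Span:
    trace_id: str
    span_id: str
    parent_id: Optional[str]  # None for the root span
    service: str
    operation: str
    duration_ms: float
    error: bool = False


def fingerprint(spans: list) -> str:
    """Hash the set of caller -> callee edges, so traces with the same flow
    across services share a fingerprint regardless of timing."""
    by_id = {s.span_id: s for s in spans}
    edges = sorted({
        (by_id[s.parent_id].service if s.parent_id in by_id else "<root>",
         s.service, s.operation)
        for s in spans
    })
    return hashlib.sha256(repr(edges).encode()).hexdigest()[:16]


class TailSampler:
    def __init__(self, history: int = 1000, rng: Optional[random.Random] = None):
        self.buffers = defaultdict(list)    # trace_id -> buffered spans
        self.baselines = defaultdict(list)  # fingerprint -> recent durations
        self.history = history
        self.rng = rng or random.Random()

    def add(self, span: Span) -> None:
        """Buffer every span until its trace is complete."""
        self.buffers[span.trace_id].append(span)

    def classify(self, spans: list, fp: str) -> str:
        """Label a trace against its fingerprint's baseline, then update it."""
        if any(s.error for s in spans):
            return "error"
        # Approximate trace duration by the longest span (normally the root).
        duration = max(s.duration_ms for s in spans)
        past = self.baselines[fp]
        label = "default"
        if len(past) >= 10:  # require some history before judging slowness
            median = statistics.median(past)
            if duration > 3 * median:
                label = "very_slow"
            elif duration > 1.5 * median:
                label = "slow"
        past.append(duration)
        del past[:-self.history]  # keep only the most recent durations
        return label

    def finish(self, trace_id: str) -> list:
        """Call once a trace is complete (e.g. after a quiet timeout).
        Returns every span of a sampled trace, or nothing at all."""
        spans = self.buffers.pop(trace_id, [])
        if not spans:
            return []
        label = self.classify(spans, fingerprint(spans))
        return spans if self.rng.random() < SAMPLE_RATES[label] else []
```

The keep-or-drop decision is made once per trace, never per span, so a sampled trace always arrives whole.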

No data is lost; it is retained in low-cost, queryable storage “just in case” it is needed later to assist in incident diagnosis. Retained telemetry data can be selectively backfilled to the observability platform, triggered via webhook from a health alert.
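Below is a minimal Python sketch of retaining everything and backfilling on alert. The in-memory `RetentionStore` stands in for low-cost storage. The webhook payload fields (`service`, `start`, `end`) and the `forward` callback are hypothetical. A real deployment would receive the payload over HTTP and forward records to the observability platform's ingest endpoint.

```python
import json
from dataclasses import dataclass


@dataclass
class Record:
    timestamp: float  # seconds since the epoch
    service: str
    body: str


class RetentionStore:
    """Stand-in for low-cost storage: keeps every raw record, sampled or not."""

    def __init__(self):
        self._records = []

    def write(self, record: Record) -> None:
        self._records.append(record)

    def query(self, service: str, start: float, end: float) -> list:
        return [r for r in self._records
                if r.service == service and start <= r.timestamp <= end]


def handle_alert_webhook(store: RetentionStore, payload: str, forward) -> int:
    """Backfill the retained records around an alert to the platform.
    The payload fields (service, start, end) are hypothetical."""
    alert = json.loads(payload)
    records = store.query(alert["service"], alert["start"], alert["end"])
    for record in records:
        forward(record)  # e.g. re-send to the observability backend
    return len(records)
```

Only the slice of data relevant to the alert is re-sent, so backfilling stays cheap while nothing is permanently discarded.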

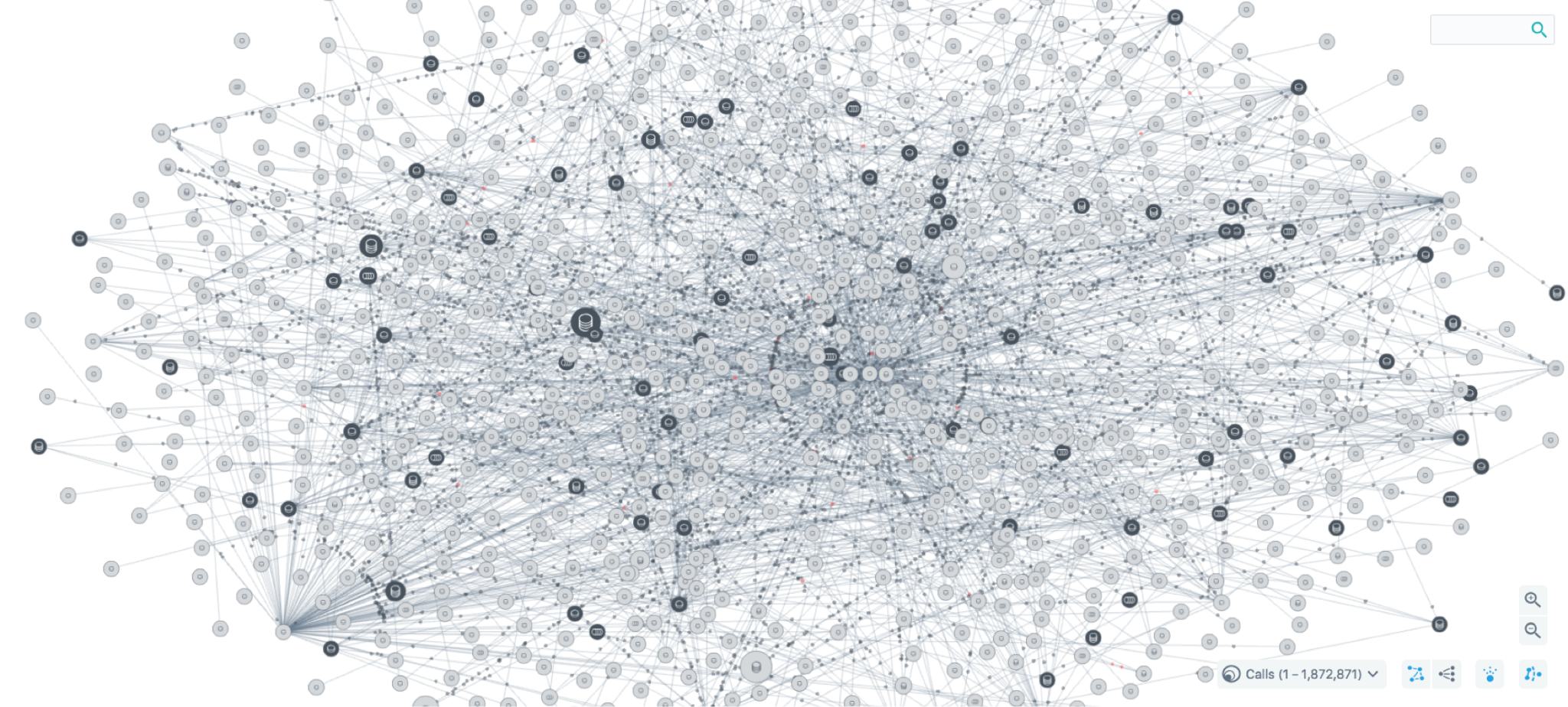

The Grepr intelligent observability data engine continually maintains an active set of pattern rules and trace fingerprints (200,000 on a busy system). This reduces the data volume sent to the observability platform by 90% or more, a huge improvement in data efficiency.

Grepr fits in like a shim between the observability agents and the platform backend. The agents are reconfigured to send data to Grepr, where it is pre-processed before continuing to the platform backend with minimal latency. There is zero disruption to engineers’ workflows. They continue to use the dashboards they are familiar with, only now with a refined dataset and a higher signal-to-noise ratio.

Reduce Your Observability Costs by 90% with Grepr

For teams that want to improve telemetry data efficiency without overhauling existing workflows, Grepr delivers results from day one. There's no complex installation, no manual pipeline maintenance, and no second set of dashboards competing for engineers' attention.

The existing agents, dashboards, and alerting rules stay exactly where they are. Grepr works alongside them, automatically optimizing data volume while retaining everything in low-cost storage for when it is needed.

Schedule a demo to see how Grepr's Intelligent Observability Data Engine can reduce your Datadog costs by 90% or more, with zero disruption to your current workflows.
