Goldsky is Web3's realtime data platform. They help developers build dApps faster through high-performance blockchain indexing, instant subgraphs, and custom data streaming pipelines.

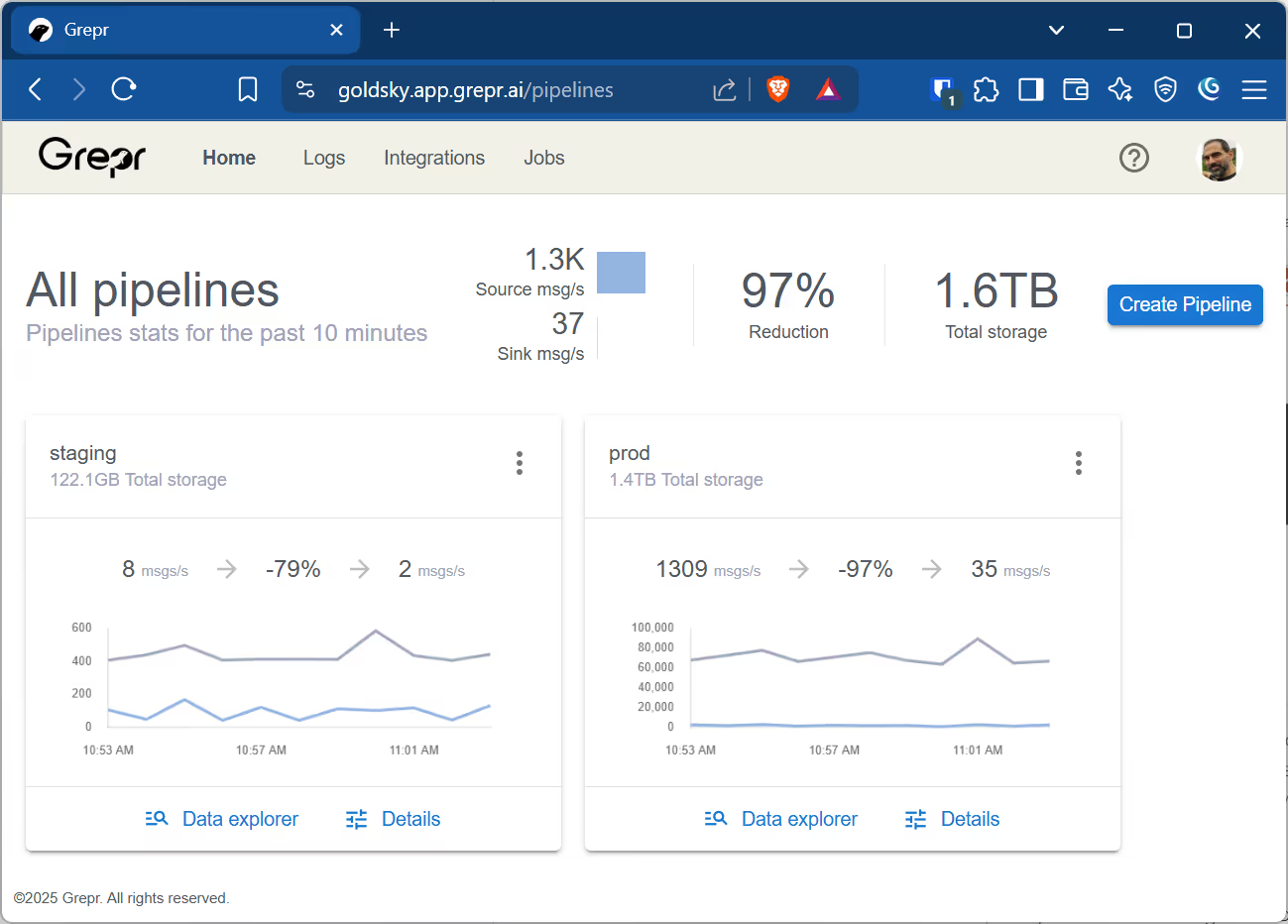

About six months ago, their team came to us with a familiar problem: log volumes had gotten out of hand, and the costs no longer matched the value. They were collecting and storing far more than they actually needed. We deployed Grepr shortly after that initial conversation, and within weeks, their Datadog logging bills dropped by 96%.

Paymahn Moghadasian, Lead Engineer at Goldsky, handled the deployment.

How the Rollout Worked

Goldsky manages their infrastructure with Terraform across separate staging and production environments, including their Datadog agents.

Paymahn started in staging. He created a pipeline and pointed the Datadog agents to Grepr in about 20 minutes. Volume there was light, around 8 messages per second, but even at that scale, Grepr achieved roughly 80% reduction.

He let it run for a week to build confidence before moving to production.

Production required more care. Rather than cutting over all at once, Paymahn used Datadog's dual-shipping capability. This let him add Grepr as a destination while still sending logs directly to Datadog, so nothing was at risk during the transition.

Here's the approach he took:

- Enable dual shipping in Datadog for logs

- For each service, add a filter in Grepr to drop all logs except the one being migrated

- Once that service's logs flow through Grepr correctly and show up in Datadog, add a Drop Rule to stop logs for that service that aren't coming from Grepr

- Tune the setup with exceptions as needed to preserve existing alerts and dashboards

- Run for a day to validate

- Move to the next service

- Optionally update alerts or dashboards to take advantage of summarized data instead of raw data

- After two weeks of validation, turn off dual-shipping from the agents

The full process took four weeks from start to finish.

The Numbers

For May 2025:

Indexed Logs: 5.7 billion messages dropped to 250 million, a 96% reduction

Ingested Logs: 12 terabytes dropped to 795 gigabytes, a 93% reduction

The dollar savings matched. After accounting for Grepr's costs, Goldsky cut their overall Datadog logging spend by more than 85%.

What About Troubleshooting?

When we asked Paymahn about impact on mean time to resolution, he gave us two words: "no impact."

His team actually found that with the noise filtered out, logs became easier to read and understand than before.

Other Wins

Time back for building: By solving the log cost problem quickly, Goldsky freed up engineering time for their actual product.

Historical search without rehydration: They could search logs across multiple months without paying extra to rehydrate archived data.

Cleaner signal: Less noise meant faster comprehension when reading through logs.

In Paymahn's Words

"Grepr's immediate, high-touch support was excellent. We always felt taken care of."

"The UI works well for what we need. It's not trying to compete with Datadog's UI, and that's fine."

"Grepr was always up and available."

"Logs arrived at Datadog with some minimal added latency, but nothing that mattered in any real way."

And his final take:

"Grepr allowed us to keep all our established observability use cases and processes intact by essentially getting rid of the noise in the data. Lower costs without any retraining."

More blog posts

All blog posts.gif)

How to Reduce Telemetry Data Costs Without Losing Coverage

How to Retain Raw Telemetry Data for HIPAA Compliance Without Breaking Your Budget

.png)